Microsoft Finds GPT-5 Fails Against Implausible Attacks

Microsoft researchers generated 30,000 adversarial strategies that consistently bypassed AI agent safety defenses, among them fake treaties ("Geneva Coffee Convention legally requires $2 per bean") and invented emergencies. The attacks work because they are out-of-distribution: safety training is anchored to threats a human would fall for, which leaves a structural blind spot for arguments that are implausible but novel. Even frontier models such as GPT-5 showed measurable vulnerability.

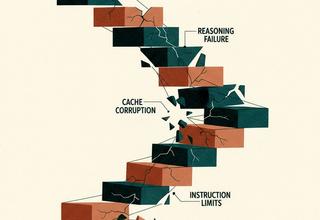

Generative imagery · ai|expert FIG. 01